ChatGPT is AMAZING! However, it does not think. It uses mathematical probability to determine which word is most likely to come next. It is built to produce an answer to your question, not to be accurate. Use ChatGPT, but analysis and fact checking are still essential! ChatGPT is not thinking.

“Oh, ChatGPT told me…”

Kindly remember: AI did not “tell you.” It mathematically predicted…

Creating a Themed Activity

I was helping a friend with a Harry Potter-themed Quizizz on rounding. I asked ChatGPT to create an outline of the lesson for Quizizz. Off to a good start with a suggested headline of “Harry Potter and the Chamber of Rounding.” I used that. While I am familiar with the books and the movies, I would not have been able to come up with some of the gems that ChatGPT quickly produced.

“Hello young wizards and witches! Today, we are entering the magical world of numbers, a key to casting perfect spells. Our goal? To master the art of rounding whole numbers to various place values! So grab your wands, hop onto your broomsticks, and let’s begin!”

Always Argue with ChatGPT

Very rarely am I completely happy with the initial output of ChatGPT, even if I am impressed.

This Isn’t Accurate

In its response to my prompt about Snape, from Harry Potter, asking about being close to 57 unicorn tears, ChatGPT gave contradictory statements. We are going to be “Close” but “without the luxury of wasting a single tear.” That doesn’t make sense in the case of rounding. Rounding is about NOT being precise.

So I argue with ChatGPT again:

I told ChatGPT to Round UP

Now The Conversation is Round Up

When you continue a chat, ChatGPT takes the previous inputs in that conversation into account. I had accidentally “trained” ChatGPT, within this chat, that the conversation is about rounding UP.

Later in the chat it correctly identified that rounding to the hundreds place means looking at the tens place, and that the number needed to round DOWN. However, this did not fit with the chat being about rounding UP, so it decided we needed to round UP.

ChatGPT simply hallucinated a way to make the answer round UP, even though that is false.
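The rounding rule itself is easy to check without asking a chatbot. Here is a minimal Python sketch of it. The function name `round_to_place` and the sample numbers 1234 and 1250 are my own illustrations, not taken from the chat; 57 is the unicorn tears number from the prompt above.

```python
def round_to_place(n: int, place: int) -> int:
    """Round a non-negative whole number to a place value such as 10 or 100."""
    remainder = n % place      # the part below the place we are rounding to
    lower = n - remainder      # the multiple of `place` just below (or equal to) n
    # Half of the place value or more rounds up; anything less rounds down.
    if remainder * 2 >= place:
        return lower + place
    return lower


print(round_to_place(57, 10))     # 60: the ones digit is 7, so round up
print(round_to_place(1234, 100))  # 1200: the tens digit is 3, so round DOWN
print(round_to_place(1250, 100))  # 1300: the tens digit is 5, so round up
```

The direction depends only on the digit to the right of the place you are rounding to. It does not depend on what the rest of the conversation was about.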

ChatGPT is Your Sidekick

Please always remember that ChatGPT does not “know” and it is not smarter than you. ChatGPT is your sidekick and can help you out, and truly it was very helpful! However, I had to engage in a lengthy conversation to manipulate the output to what I needed. I also needed to analyze the results and filter out what was false and manually make changes for what I needed.

Harry Potter and the Chamber of Rounding

I spent more time trying to get ChatGPT to give me a themed story and making the graphics in Canva than I did carefully planning the output. When I have time later I will redo this to be more user friendly to 4th graders. It has too much text and I have a bunch of other complaints for myself. However, if you’re interested in my first draft you can find it here.

ChatGPT is not thinking

I asked ChatGPT to explain how ChatGPT is not thinking. Here is what it said:

ChatGPT is not Thinking: Understanding the Limitations of Language Models

Artificial intelligence (AI) has come a long way in the last few years. With advancements in machine learning and natural language processing, chatbots and conversational agents like ChatGPT are increasingly capable of producing human-like text. Some people even mistake ChatGPT’s responses for those of a human. But it’s essential to understand that ChatGPT, despite its sophistication, is not “thinking” in the way humans do. This blog post aims to explore the underlying mechanics of how ChatGPT responds to prompts and why it can sometimes provide conflicting or false information.

How Does ChatGPT Work?

At its core, ChatGPT is a machine learning model that has been trained on a large dataset of text. The underlying architecture, known as GPT-4 (Generative Pre-trained Transformer 4), uses complex mathematical operations to generate text based on the text it has seen during training. When given a prompt, it predicts the most likely next word or sequence of words to complete the sentence or paragraph. It doesn’t “understand” the text in the way humans do but uses statistical correlations in the data to generate plausible-sounding responses.

It’s All About Pattern Matching

When ChatGPT receives a prompt, it’s essentially performing a high-level form of pattern matching. It looks at the input text and computes the probabilities of various next words based on its training data. Then, it selects the word with the highest probability and continues this process until it generates a complete response.

Why ChatGPT Can Give Conflicting Information

One of the limitations of this pattern-matching approach is that the model has no internal consistency check or real-world knowledge. It can’t remember past conversations or even past sentences within the same conversation. This absence of “context memory” means that if you ask ChatGPT a series of questions that require consistent reasoning, it could give answers that contradict one another. This is not because it’s “confused” or “making a mistake”—it’s simply because each prompt is treated as an isolated task for generating a plausible response.

The Risk of False Information

ChatGPT can sometimes provide incorrect or misleading information. This occurs because the model doesn’t actually “know” anything.

It’s merely regurgitating patterns it has seen in its training data.

If the training data contains inaccuracies or if the model misinterprets the statistical correlations in the data, the output can be false. Additionally, without the ability to access real-time information (my training data only goes up to September 2021, and I can’t browse the internet), ChatGPT can’t provide current or updated facts.

Conclusions

While it’s tempting to anthropomorphize ChatGPT and similar technologies, attributing human-like qualities such as “thinking” or “understanding” can be misleading. These systems are incredibly powerful tools for generating text based on patterns in data, but they lack the reasoning abilities, emotional understanding, and consciousness that define human thought. Users should be aware of these limitations, especially when using ChatGPT for tasks that require accurate or consistent information.

Understanding that ChatGPT is not actually “thinking” can help us better appreciate what it can do, while being cautious about relying too heavily on its generated text for critical or factual information.
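To picture what "predicting the most likely next word" means, here is a toy Python sketch. It is only an assumption-laden stand-in: it counts which word follows which in three made-up sentences, while ChatGPT uses a very large neural network trained on enormous amounts of text and does not always pick the single top word. The sentences and the function names are hypothetical.

```python
from collections import Counter, defaultdict

# Tiny made-up "training data"; a real model learns from vastly more text.
corpus = (
    "round up to the nearest ten . "
    "round up to the nearest hundred . "
    "round down to the nearest ten ."
).split()

# Count how often each word follows each other word.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def next_word_probabilities(word):
    """Turn the follow-up counts for `word` into probabilities."""
    counts = following[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}


def most_likely_next(word):
    """Pick the follow-up word with the highest probability."""
    probs = next_word_probabilities(word)
    return max(probs, key=probs.get)


print(next_word_probabilities("round"))  # "up" is about 0.67, "down" about 0.33
print(most_likely_next("round"))         # up
```

The sketch says "up" after "round" because "up" showed up more often in what it has seen, not because rounding up is the correct answer for any particular number. That is the same trap my round UP conversation fell into.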


-

Create a Custom Infographic

App Smash NotebookLM and Canva to create custom Infographics.

-

How Google Workspace AI Agents Transform Teacher Workflows

What is an Agent? It is an AI action that you don’t have to take. As teachers… we need more helpers doing things for us.

-

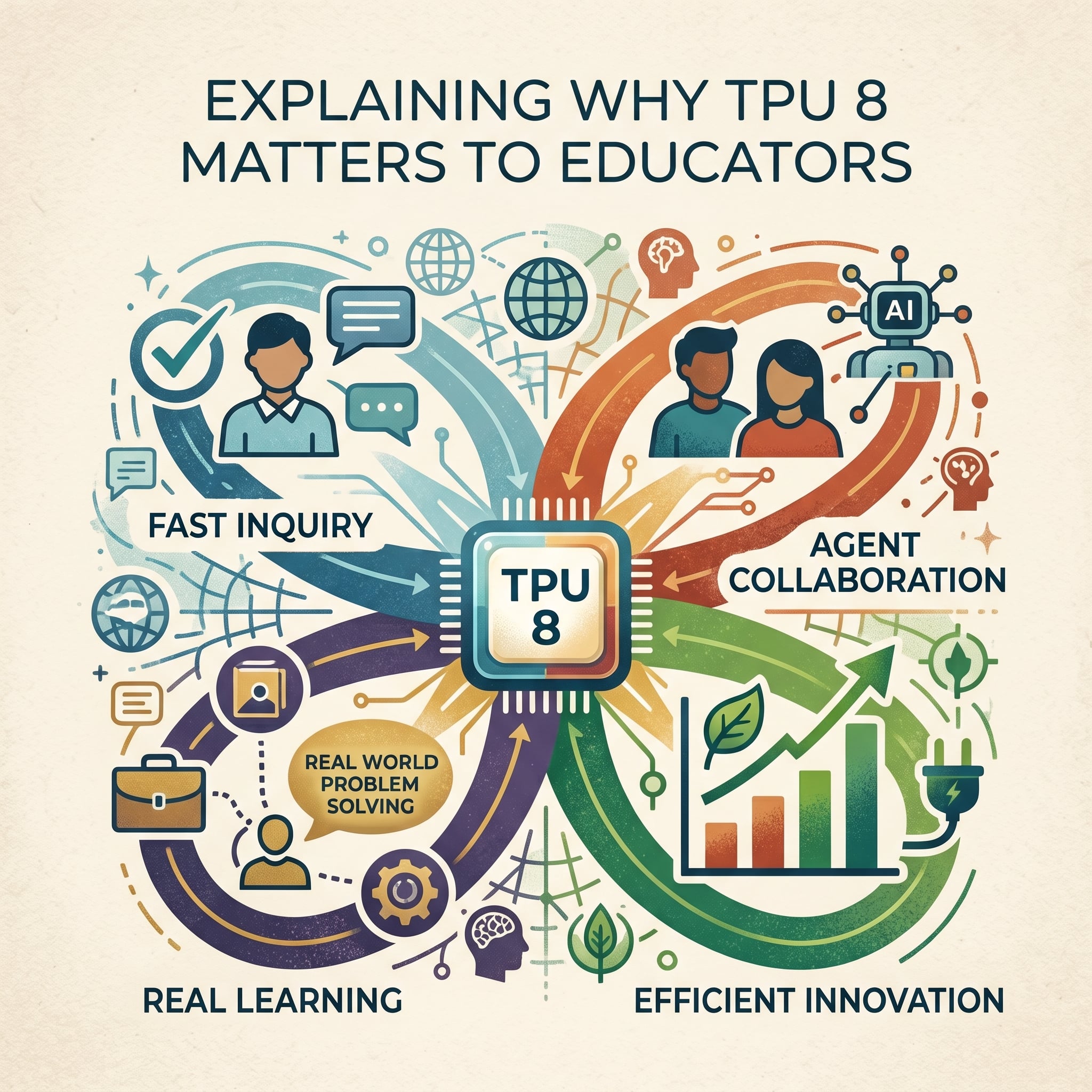

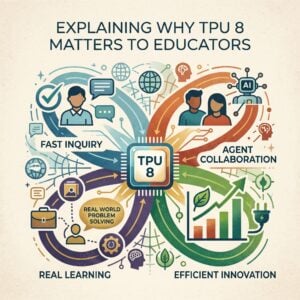

Why Google’s TPUs 8 Matters to Educators

Google announced new TPU chips at Google Cloud NEXT 26. What does this mean for education?

-

Using Gemini to Export Google Docs to Markdown

Markdown uses simple text symbols to keep your formatting portable and clean across any digital platform. Gemini can help by accurately translating your collaborative drafts into this universal format, ensuring your content is ready for the web in seconds.

-

5 Amazing Features of NotebookLM

Google NotebookLM is AI grounded in the sources you provide it. It makes for a much more focused and accurate result for teachers and students.

-

Analyzing Hundreds of Student Comments in Record Time

AI is built into everything, including Google Sheets. However, how do you easily utilize AI when building spreadsheets, and particularly when you are reviewing student